A few years ago I showed how to use Microsoft’s Log Parser tool to take IIS log files and import into a SQL database.

A few years ago I showed how to use Microsoft’s Log Parser tool to take IIS log files and import into a SQL database.

From Microsoft: Log parser is a powerful, versatile tool that provides universal query access to text-based data such as log files, XML files and CSV files, as well as key data sources on the Windows® operating system such as the Event Log, the Registry, the file system, and Active Directory®. You tell Log Parser what information you need and how you want it processed. The results of your query can be custom-formatted in text based output, or they can be persisted to more specialty targets like SQL, SYSLOG, or a chart.

In short, using something like this to take IIS logs and dump into a new SQL table:

C:\Program Files (x86)\Log Parser 2.2>logparser “SELECT * INTO iisLogs FROM c:\temp\logs\*.log ” -i:iisw3c -o:SQL -server:localhost -database:webLogs -username:sa -password:yourpass -createTable: ON

But, if you’re importing tons of records, it might seem to take a while. BUT: you can use the option “transactionRowCount” to gain some performance. The transactionRowCount option determines how many rows are included in each transaction. By default, transactionRowCount is 1, so after every row, the transaction is committed. If you set it to “-1” it will include everything in 1 large transaction.

Test Results

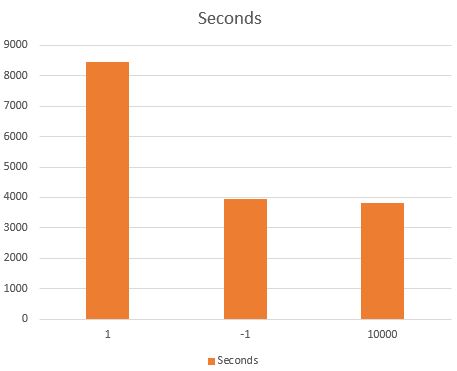

Below a did a few tests. My test included 36,000,000 rows.

Using the default (no need to supply the option):

Statistics: ----------- Elements processed: 36158727 Elements output: 36158727 Execution time: 8448.14 seconds (02:20:48.14)

What if we only use 1 entire transaction (“transactionRowCount:-1”)

Statistics: ----------- Elements processed: 36158727 Elements output: 36158727 Execution time: 3944.09 seconds (01:05:44.09)

If we use a transaction every 10,000 records (“transactionRowCount:10000”)

Statistics: ----------- Elements processed: 36158727 Elements output: 36158727 Execution time: 3818.74 seconds (01:03:38.74)

Results

In my case, the 10,000 value was just slightly faster than -1.

The usage of this option:

logparser "SELECT * INTO iisLogs4 FROM c:\logs\*.log" -i:iisw3c -o:SQL -server:localhost -database:iisLogs -transactionRowCount:10000 -createTable:ON

This wasn’t an extensive test by any means, but I figured it’s a good starting point for anyone looking to speed up their imports.

[…] an updated article, I discuss increasing import performance […]

LikeLike

I am trying to parse a 50MB log file using LogParser. It’s not a typical logfile that can be parsed with any of the logparser’s built-in input formats. It’s a programmatically generated WebLogic application log file.

logparser (without me specifying an input format, assumes “TEXTLINE”) and responds after 11 to 12 seconds for a query that searches for an id in the logfile.

With custom input format (-i:COM), it takes about 20 seconds for the same file (by using the same query).

Do you have any suggestions as to how the performance can be improved? Clearly, 20 or 12 seconds isn’t good.

LikeLike

I have found much the same. There’s an additional good reason for using -1 — if you put constraints on your input table (to ensure that you don’t load the same data twice), it can protect you from failures. If the execution fails partway, it won’t write the .lpc checkpoint file, but any interim commits will have already happened, so the next data load will try to re-insert them. Using one big transaction helps prevent that issue. Of course, you may need a lot of tempdb space for this, depending on how much you are loading at once!

LikeLike